Microsoft’s Recall feature, designed to give Copilot+ PCs a "photographic memory" of a user’s digital activity, is back in the spotlight. This time the attention is not on its AI capabilities but on a significant security weakness that exposes sensitive user data. Cybersecurity researcher Alex Hagenah has detailed a flaw in Recall’s architecture showing how an attacker with local access can exfiltrate screenshots, OCR’d text, and other metadata without administrator privileges. Compounding the issue, Microsoft has classified Hagenah’s findings as "not a vulnerability," a stance that has ignited fierce debate within the cybersecurity community and raised broader questions about the future of AI-powered features and user privacy.

Hagenah’s analysis, published on the TotalRecall GitHub page, pinpoints where the protection breaks down. He notes that the Recall database itself, which stores the user’s activity snapshots, is "rock solid": it is encrypted and sits inside a Virtualization-Based Security (VBS) enclave. The weak point, according to Hagenah, is the "delivery truck," a separate system process named AIXHost.exe. Once a user has authenticated to Recall, the system passes the captured data to AIXHost.exe for further processing and AI analysis. That process does not receive the same protections as the database, which gives attackers an opening. Hagenah sums up the problem: "The vault is solid. The delivery truck is not."

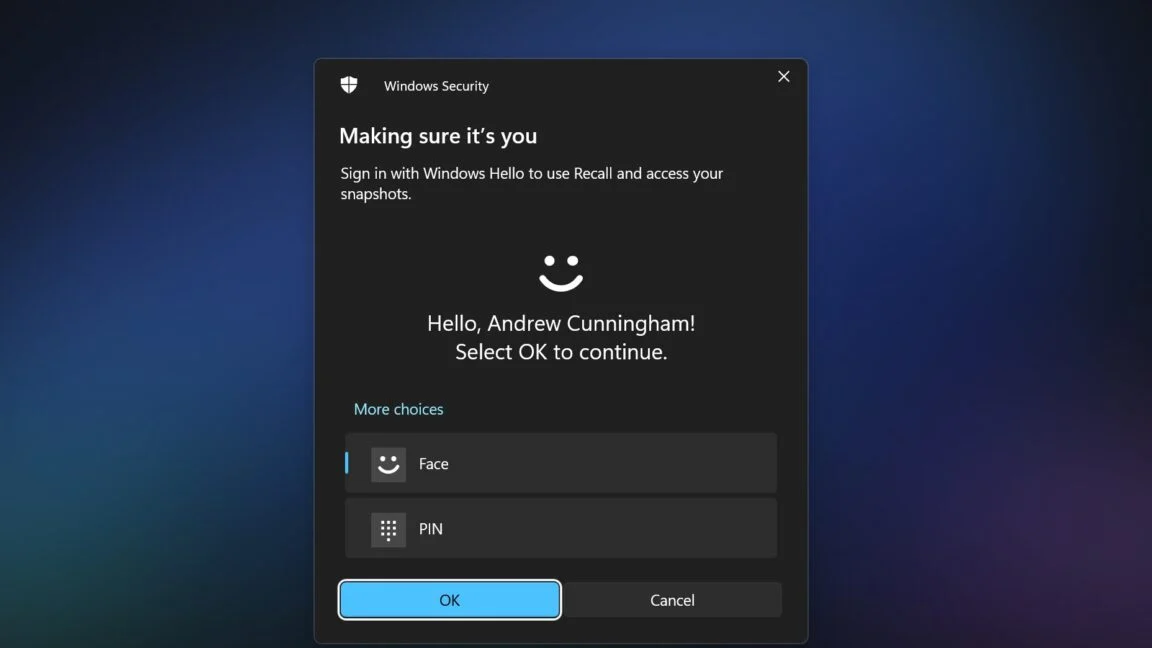

The TotalRecall Reloaded tool, which Hagenah built to demonstrate the flaw, exploits this architectural weakness with alarming simplicity. It injects a Dynamic Link Library (DLL) into the AIXHost.exe process, and the injection does not require administrator privileges, which significantly lowers the bar for potential attackers. Once injected, the DLL waits passively in the background. The decisive moment comes when the legitimate user opens Recall and authenticates with Windows Hello, Microsoft’s biometric and PIN sign-in system. As Recall begins sending data to the less-protected AIXHost.exe process, the tool intercepts it, capturing screenshots, the text extracted from them by Optical Character Recognition (OCR), and other metadata Recall has collected. According to Hagenah, the interception can continue even after the user closes the Recall session, allowing ongoing exfiltration.
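To make the "vault versus delivery truck" distinction concrete, here is a toy Python model. It is not exploit code and touches no real Recall component: the `Enclave` and `HostProcess` classes and the observer callback are hypothetical stand-ins, with the callback playing the role of attacker code already loaded into the host process. The model only shows the shape of the problem: the vault refuses to release anything without authentication, but once it does, whatever runs inside the receiving process sees the plaintext.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Toy model of the "vault" / "delivery truck" split. All names are hypothetical;
# nothing here interacts with real Recall or Windows components.


@dataclass
class Enclave:
    """Stand-in for the VBS-protected store: releases records only after authentication."""
    records: List[str]

    def release(self, hello_authenticated: bool) -> List[str]:
        if not hello_authenticated:
            raise PermissionError("Windows Hello authentication required")
        return list(self.records)


@dataclass
class HostProcess:
    """Stand-in for AIXHost.exe: an ordinary process that receives released records."""
    observers: List[Callable[[str], None]] = field(default_factory=list)

    def receive(self, records: List[str]) -> None:
        for record in records:
            # Any code running inside this process sees every plaintext record.
            for observer in self.observers:
                observer(record)


def simulate() -> List[str]:
    vault = Enclave(records=["snapshot 1 OCR: bank login", "snapshot 2 OCR: private chat"])
    host = HostProcess()
    captured: List[str] = []
    host.observers.append(captured.append)  # attacker code, attached before the user acts

    try:
        host.receive(vault.release(hello_authenticated=False))
    except PermissionError:
        pass  # the vault holds: nothing reaches the host process yet

    host.receive(vault.release(hello_authenticated=True))  # the user authenticates
    return captured
```

Calling `simulate()` returns both records: the authentication check worked exactly as designed, and the data was still exposed after it left the vault.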

Hagenah emphasizes that his tool does not directly bypass the protection provided by Windows Hello or the VBS enclave. Instead, it piggybacks on the legitimate user’s authentication. "The VBS enclave won’t decrypt anything without Windows Hello," Hagenah writes. "The tool doesn’t bypass that. It makes the user do it, silently rides along when the user does it, or waits for the user to do it." The distinction matters: the attack is not a cryptographic bypass but an abuse of the post-authentication hand-off between processes. Some tasks, such as grabbing the most recent Recall screenshot, reading metadata about the Recall database’s structure, and even deleting the user’s entire Recall database, can reportedly be performed without any Windows Hello authentication at all, which points to further design oversights. Once the user has authenticated, Hagenah says the tool can reach both newly recorded and historical data in the Recall database, effectively exposing the user’s entire digital past.
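The reported authentication requirements can be summarized as a small lookup table. The operation labels below are hypothetical and simply transcribe Hagenah’s claims as described in this article; they are not Recall API names, and the table says nothing about how each operation is carried out.

```python
from enum import Enum
from typing import List


class Gate(Enum):
    NONE = "no Windows Hello prompt needed"
    USER_HELLO = "rides on the user's own Windows Hello authentication"


# Reported gating per operation (hypothetical labels transcribing the claims above).
REPORTED_GATES = {
    "latest_screenshot": Gate.NONE,
    "database_metadata": Gate.NONE,
    "delete_database": Gate.NONE,
    "new_and_historical_snapshots": Gate.USER_HELLO,
}


def needs_user_authentication(operation: str) -> bool:
    """True if the operation reportedly only works after the user authenticates."""
    return REPORTED_GATES[operation] is Gate.USER_HELLO


def ungated_operations() -> List[str]:
    """Operations reportedly possible without any Windows Hello authentication."""
    return sorted(op for op, gate in REPORTED_GATES.items() if gate is Gate.NONE)
```

Laid out this way, the asymmetry is easy to see: the only gate is the user’s own sign-in, and three of the four reported operations do not wait for it.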

The Genesis of Recall: A Contentious Feature

To fully appreciate the gravity of Hagenah’s findings, it’s essential to contextualize Microsoft Recall within its broader launch and the surrounding controversies. Announced in May 2024 as a cornerstone feature of Microsoft’s new line of Copilot+ PCs, Recall was presented as an innovative AI capability designed to revolutionize personal computing. Its core function is to continuously capture snapshots of a user’s screen activity at regular intervals, process them using AI for context and searchability, and store this "photographic memory" locally. The stated goal was to allow users to easily search and retrieve anything they had seen or done on their PC, from specific lines of code in a programming environment to a forgotten detail on a webpage.
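The capture-and-search idea can be illustrated with a toy keyword index over OCR text. This is a hypothetical sketch, not Microsoft’s implementation: Recall’s real pipeline uses AI models for context and semantic search, while this example only maps each word seen on screen to the snapshots that contained it. The `Snapshot` and `SnapshotIndex` names are invented for illustration.

```python
import re
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List, Set


@dataclass
class Snapshot:
    taken_at: datetime
    app: str
    ocr_text: str  # text assumed to be already extracted from the screenshot


class SnapshotIndex:
    """Toy local index: maps each lowercase word to the snapshots containing it."""

    def __init__(self) -> None:
        self.snapshots: List[Snapshot] = []
        self.postings: Dict[str, Set[int]] = defaultdict(set)

    def add(self, snap: Snapshot) -> None:
        position = len(self.snapshots)
        self.snapshots.append(snap)
        for word in re.findall(r"\w+", snap.ocr_text.lower()):
            self.postings[word].add(position)

    def search(self, query: str) -> List[Snapshot]:
        """Return snapshots containing every query word, oldest first."""
        words = re.findall(r"\w+", query.lower())
        if not words:
            return []
        hits = set.intersection(*(self.postings.get(w, set()) for w in words))
        return [self.snapshots[i] for i in sorted(hits)]


index = SnapshotIndex()
index.add(Snapshot(datetime(2024, 5, 20, 9, 0), "Editor", "def parse_config(path):"))
index.add(Snapshot(datetime(2024, 5, 20, 9, 5), "Browser", "Order confirmation for path lights"))
```

Here `index.search("parse_config")` finds the editor snapshot, and `index.search("path")` finds both. Even this toy version shows why such a store is sensitive: every word that appeared on screen becomes searchable.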

However, from the moment of its announcement, Recall ignited a firestorm of privacy concerns. Critics immediately dubbed it "spyware" and warned about the vast amounts of sensitive personal, financial, and proprietary data it would inevitably collect. Cybersecurity experts, privacy advocates, and mainstream media outlets voiced strong opposition, questioning the implications of a system that records virtually every interaction, potentially including passwords, private conversations, banking details, and confidential work documents. The early implementation details, which suggested the feature might be enabled by default and left it unclear how the local database was protected, only heightened these fears.

In response to the overwhelming backlash, Microsoft quickly moved to implement significant changes to Recall’s design before its public rollout. These adjustments included making Recall an opt-in feature, requiring Windows Hello authentication for its initial setup and access, and emphasizing that all data would be stored locally on the device and encrypted. Microsoft explicitly stated that data would not be sent to the cloud or shared with external entities. These concessions were intended to assuage privacy concerns and rebuild user trust. Ironically, Hagenah’s discovery demonstrates that even with these adjustments, a fundamental architectural flaw in how Recall processes and delivers data post-authentication undermines the intended security assurances.

A Chronology of Disclosure and Response

The timeline of Hagenah’s discovery and Microsoft’s response paints a complex picture of security research, responsible disclosure, and corporate classification.

- May 20, 2024: Microsoft officially announces Copilot+ PCs and the Recall feature, generating immediate excitement and simultaneous privacy concerns.

- May 21 – Early June 2024: Widespread media coverage and expert analysis highlight significant privacy risks associated with Recall’s continuous data capture.

- June 7, 2024: Microsoft publicly announces significant changes to Recall, making it opt-in, requiring Windows Hello, and emphasizing local, encrypted storage, directly addressing many of the initial privacy criticisms.

- March 6: Alex Hagenah, a seasoned cybersecurity researcher, reports the AIXHost.exe flaw to Microsoft’s Security Response Center (MSRC), following responsible disclosure practice.

- April 3: Microsoft classifies Hagenah’s submission as "not a vulnerability," roughly four weeks after the initial report.

- After the classification: Hagenah, having exhausted the responsible disclosure process and disagreeing with Microsoft’s decision, publicly discloses his findings and releases the TotalRecall Reloaded tool, bringing the issue to the attention of the broader cybersecurity community and the public.

This chronology highlights a divergence in perspective: Hagenah identifies a clear exploit pathway that compromises user data post-authentication, while Microsoft, after internal review, deems it not to meet their criteria for a "vulnerability." This discrepancy forms the crux of the ongoing debate.

Microsoft’s Official Stance: "Not a Vulnerability"

Microsoft’s decision to classify Hagenah’s discovery as "not a vulnerability" is perhaps the most contentious aspect of this episode. A vulnerability is typically understood as a software flaw that can be exploited to compromise the confidentiality, integrity, or availability of a system or its data. Microsoft’s position likely stems from a narrower interpretation of its security model.

Microsoft has not publicly detailed its reasoning for this specific report, but its position plausibly rests on the fact that the core security mechanisms (the VBS enclave protecting the database and Windows Hello authentication) are not directly bypassed. The attack depends on the user first authenticating with Windows Hello, after which a local attacker exploits a process that receives data once it has been legitimately decrypted for use by the system. From that perspective, the issue may fall under "local access risks" or "post-authentication scenarios," which are often treated as outside the scope of the remote code execution or privilege escalation bugs that receive critical ratings. Microsoft might also argue that an attacker who already has local access and can inject code into user processes has already changed the risk model significantly.

However, this interpretation has been met with significant skepticism and strong disagreement from many cybersecurity experts. The fact that the DLL injection does not require administrator privileges is a critical differentiator. It means a malicious application running with standard user permissions, or even a sophisticated piece of malware already present on the system, could exploit this. Furthermore, the ability to exfiltrate highly sensitive data without requiring a direct bypass of the VBS enclave or Windows Hello, but rather by observing the "delivery truck," is precisely what many would define as a serious confidentiality vulnerability. The security community often views any unauthorized access to sensitive data, especially without elevated privileges, as a significant flaw, regardless of whether a core cryptographic component was directly broken.
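One way to make the disagreement concrete is to score the issue with CVSS v3.1, a widely used severity standard. This is an illustration, not Microsoft’s assessment: the vector (local attack vector, low complexity, low privileges, user interaction required, high confidentiality impact, no integrity or availability impact) is an assumption drawn from the description above, and MSRC applies its own servicing criteria. The weights and the Roundup function follow the FIRST CVSS v3.1 specification; the helper only handles the scope-unchanged case, and its name is hypothetical.

```python
import math

# CVSS v3.1 base-metric weights (FIRST specification), scope-unchanged case only.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}


def roundup(x: float) -> float:
    """CVSS v3.1 Roundup: smallest one-decimal value >= x, robust to float noise."""
    int_input = round(x * 100000)
    if int_input % 10000 == 0:
        return int_input / 100000.0
    return (math.floor(int_input / 10000) + 1) / 10.0


def base_score_scope_unchanged(av: str, ac: str, pr: str, ui: str,
                               c: str, i: str, a: str) -> float:
    """Base score for a vector whose Scope metric is Unchanged."""
    iss = 1 - (1 - CIA[c]) * (1 - CIA[i]) * (1 - CIA[a])
    impact = 6.42 * iss
    if impact <= 0:
        return 0.0
    exploitability = 8.22 * AV[av] * AC[ac] * PR_UNCHANGED[pr] * UI[ui]
    return roundup(min(impact + exploitability, 10))


# Assumed vector AV:L/AC:L/PR:L/UI:R/S:U/C:H/I:N/A:N (an illustration, not an official rating).
score = base_score_scope_unchanged("L", "L", "L", "R", "H", "N", "N")
```

Under these assumptions the base score works out to 5.0 (Medium), and dropping the user-interaction requirement (UI:N) raises it to 5.5. The exact number matters less than the fact that a standard rubric treats this kind of post-authentication data exposure as a scoreable confidentiality issue.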

Broader Implications: Privacy, Security, and Trust

Hagenah’s findings and Microsoft’s response carry profound implications across several domains:

- User Privacy and Data Confidentiality: The most immediate and concerning implication is the compromise of user privacy. Recall captures everything: private messages, financial details, passwords entered into forms, medical information, and proprietary business data. If this data can be intercepted by a local attacker (potentially malware) without admin rights, the promise of "local and secure" storage is severely undermined. Users who opted into Recall, trusting Microsoft’s assurances, now face the risk of having their entire digital history exposed.

- Enterprise Security Risks: In corporate environments, the implications are even more severe. A compromised employee workstation could become a conduit for industrial espionage or data breaches. Malware leveraging this technique could systematically exfiltrate sensitive company information from employees’ Copilot+ PCs, posing a significant threat to intellectual property and operational security.

- Erosion of Trust in AI Features: This incident further erodes user and enterprise trust in AI-powered features, particularly those that involve extensive data collection. Microsoft has been at the forefront of integrating AI into its products, but such security oversights, coupled with a dismissive response, can foster cynicism and reluctance to adopt new AI functionalities, especially on critical business systems.

- The Definition of "Vulnerability": The debate over whether this is a "vulnerability" highlights a fundamental disagreement between some vendor perspectives and the broader security research community. If a design choice allows unauthorized data access without high privileges, many researchers would argue it is a vulnerability, irrespective of whether the core encryption mechanism was directly broken.

- Future of Responsible Disclosure: Microsoft’s "not a vulnerability" stance could create a chilling effect on future responsible disclosures. If researchers invest time and effort to identify and report issues, only for them to be dismissed, they might be less inclined to follow established protocols, potentially leading to more zero-day exploits being sold on the black market or publicly disclosed without vendor remediation.

- Regulatory Scrutiny: Data privacy regulations like GDPR, CCPA, and others require robust protection of personal data. If sensitive user data is exposed due to such a flaw, even if Microsoft calls it "not a vulnerability," it could still trigger regulatory investigations and potential fines, particularly if the flaw is exploited in the wild.

Expert Perspectives and Industry Debate

The cybersecurity community has largely sided with Hagenah, viewing Microsoft’s classification as a misjudgment. Many experts argue that the critical factor is the ability to exfiltrate sensitive data without requiring administrator privileges. Kevin Beaumont, a prominent security researcher, notably criticized Microsoft’s initial implementation of Recall as "a disaster for infosec." Others have echoed concerns that Microsoft’s definition of a "vulnerability" is too narrow, failing to encompass significant design flaws that lead to privacy compromises.

The discussion often revolves around the concept of "attack surface." While the Recall database itself might be in a secure enclave, the AIXHost.exe process significantly expands the attack surface, creating an exploitable pathway that was not adequately secured. This highlights a common challenge in complex software systems: securing individual components is not enough; the security of inter-component communication and data flow is equally, if not more, critical.
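The attack-surface point can be expressed as a simple trust-boundary check over a data-flow diagram: flag any flow that carries plaintext from a more isolated component to a less isolated one. A minimal sketch under stated assumptions: the numeric protection levels, the component labels, and the capture component are hypothetical, and only the enclave-to-AIXHost.exe flow comes from Hagenah’s description.

```python
from typing import Dict, List, Tuple

# Hypothetical isolation levels: higher means more strongly isolated.
PROTECTION = {"vbs_enclave": 3, "user_process": 1}


def boundary_violations(
    components: Dict[str, str],
    flows: List[Tuple[str, str, bool]],
) -> List[Tuple[str, str]]:
    """Return flows where plaintext moves to a less-protected component.

    components maps a component name to a protection label;
    flows are (source, destination, carries_plaintext) edges.
    """
    flagged = []
    for src, dst, carries_plaintext in flows:
        downgrade = PROTECTION[components[dst]] < PROTECTION[components[src]]
        if carries_plaintext and downgrade:
            flagged.append((src, dst))
    return flagged


components = {
    "Snapshot capture (hypothetical)": "user_process",
    "Recall database (enclave)": "vbs_enclave",
    "AIXHost.exe": "user_process",
}
flows = [
    ("Snapshot capture (hypothetical)", "Recall database (enclave)", True),  # into the vault
    ("Recall database (enclave)", "AIXHost.exe", True),                     # the "delivery truck"
]
```

Only the enclave-to-AIXHost.exe edge is flagged: data moving into the vault is fine, but the decrypted hand-off out of it lands in a component with weaker isolation.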

Looking Ahead: User Action and Future Developments

Given Microsoft’s current stance, the onus largely falls on users to protect themselves. For those concerned about the privacy implications, the most straightforward advice is to disable Recall entirely on their Copilot+ PCs. Users who choose to enable it should understand the inherent risks and may consider it unsuitable for handling highly sensitive information. Enterprises deploying Copilot+ PCs will need to assess their risk posture carefully and may want to use Group Policy to disable Recall across their fleets.
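The group-policy approach mentioned above can be checked programmatically. This is a minimal sketch assuming the "Turn off saving snapshots for use with Recall" policy is stored as the DWORD `DisableAIDataAnalysis` under `Software\Policies\Microsoft\Windows\WindowsAI`. That path and value name reflect our understanding of Microsoft’s documentation and should be verified against the current docs, since Recall’s policy set has changed over time. The helper names are hypothetical, the registry reader is injectable so the logic can be tested off Windows, and a set policy value does not by itself prove that Recall is off.

```python
import sys
from typing import Callable, Optional

# Assumed policy location and value name; confirm against Microsoft's current Recall docs.
POLICY_SUBKEY = r"Software\Policies\Microsoft\Windows\WindowsAI"
POLICY_VALUE = "DisableAIDataAnalysis"


def read_policy_dword(hive: str) -> Optional[int]:
    """Read the assumed policy value from HKLM or HKCU; None if absent or not on Windows."""
    if sys.platform != "win32":
        return None
    import winreg  # only available on Windows

    root = winreg.HKEY_LOCAL_MACHINE if hive == "HKLM" else winreg.HKEY_CURRENT_USER
    try:
        with winreg.OpenKey(root, POLICY_SUBKEY) as key:
            value, _value_type = winreg.QueryValueEx(key, POLICY_VALUE)
            return int(value)
    except OSError:  # key or value missing
        return None


def snapshots_disabled_by_policy(
    reader: Callable[[str], Optional[int]] = read_policy_dword,
) -> bool:
    """True if either the machine or the user policy hive sets the value to 1."""
    return any(reader(hive) == 1 for hive in ("HKLM", "HKCU"))
```

Both hives are checked because the assumed policy may be applied per machine or per user; in a managed fleet the same check would normally be done through existing device-management reporting rather than a script.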

The future of Recall, and potentially other AI features involving extensive data capture, remains uncertain. Microsoft may face increasing pressure from users, the security community, and potentially regulators to re-evaluate its stance and implement additional safeguards around the AIXHost.exe process or the overall data flow. This incident serves as a stark reminder that while AI promises powerful new capabilities, the foundational principles of cybersecurity—confidentiality, integrity, and availability—must remain paramount. The "delivery truck" of data must be as secure as the "vault" itself, especially when that data encompasses the entirety of a user’s digital life.
